This article compares RTDs and thermocouples, covering their working principles, qualities, and typical applications.

In industrial engineering, measuring temperature is a key part of monitoring the operation of mechanical, electrical, and electronic systems, determining their performance, and evaluating their health.

Likewise, in chemical processes, temperature and heat control can be crucial to achieving the desired end product. The same applies to welding, heat treatment, industrial ovens used for plastic shrinking, and a wide range of other applications. Temperature measurement is therefore critical in many engineering operations, and doing it with accuracy and precision is equally important.

While many types of thermometers can be used to measure temperature, including the familiar “mercury” thermometers, bimetallic thermometers, and vapor pressure thermometers, the ones most widely used in industrial environments are resistance temperature detectors (RTDs) and thermocouples. Each type has its own advantages and drawbacks, and each suits a distinctly different set of applications.

Working Principle of Resistance Thermometers (RTD)

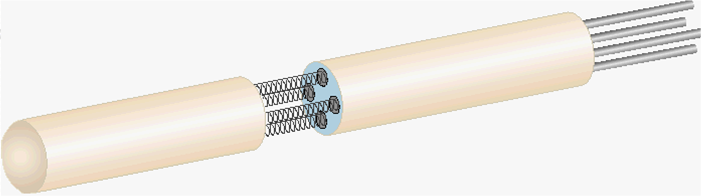

The RTD temperature sensor relies on the temperature dependence of the electrical resistance of metals: as the temperature of a metal rises, so does its electrical resistance. Every material has its own temperature coefficient of resistance, and a positive, stable coefficient makes some metals better suited than others to serve as the measuring element. Platinum, for example, has a nearly linear resistance-temperature relationship over a wide range, and nickel is also used, although its response is less linear. This predictable behavior yields high accuracy and good repeatability across repeated measurements. Moreover, platinum is chemically stable and highly resistant to corrosion, and nickel is reasonably corrosion-resistant as well.
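
The resistance-temperature relationship described above can be sketched in a few lines of Python. This is a minimal illustration, not a calibrated implementation: it assumes a standard Pt100 platinum element (100 Ω at 0 °C) and uses the nominal IEC 60751 coefficients, none of which come from this article. The function names are hypothetical. The quadratic form shown is the Callendar-Van Dusen equation restricted to temperatures of 0 °C and above.

```python
import math

# Assumed constants for a standard Pt100 element (nominal IEC 60751 values).
# These are illustrative assumptions, not values taken from the article.
R0 = 100.0       # resistance in ohms at 0 °C
ALPHA = 0.00385  # mean temperature coefficient between 0 and 100 °C, per °C
A = 3.9083e-3    # Callendar-Van Dusen coefficient, per °C
B = -5.775e-7    # Callendar-Van Dusen coefficient, per °C squared


def resistance_from_temperature(temp_c: float) -> float:
    """Return the element resistance in ohms for temp_c >= 0 °C."""
    return R0 * (1.0 + A * temp_c + B * temp_c ** 2)


def temperature_linear(resistance_ohm: float) -> float:
    """Estimate temperature in °C from R = R0 * (1 + ALPHA * T)."""
    return (resistance_ohm / R0 - 1.0) / ALPHA


def temperature_quadratic(resistance_ohm: float) -> float:
    """Invert R = R0 * (1 + A*T + B*T^2) for T >= 0 °C."""
    # Solve B*T^2 + A*T + (1 - R/R0) = 0 and keep the root that gives
    # T = 0 when R = R0.
    discriminant = A * A - 4.0 * B * (1.0 - resistance_ohm / R0)
    return (-A + math.sqrt(discriminant)) / (2.0 * B)
```

Under these assumptions, `resistance_from_temperature(100.0)` gives roughly 138.5 Ω, and both inverse functions return close to 100 °C for that reading. The two estimates diverge at higher temperatures (by several degrees around 250 °C), which is why the curve is described as nearly linear rather than exactly linear.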
